When Greg Brockman sat down in the Big Technology Podcast studio, OpenAI had just done three things at once: shut down Sora, secured $110 billion in funding, and completed pre-training of its next-generation model, Spud. Killing a star product, closing a massive funding round, and betting on a new model all happened at the same time, and each demanded an explanation. Over the next 90 minutes, the OpenAI co-founder and president faced a hard task: recasting every seemingly reactive decision as a forward-looking strategic choice.

Did he succeed? For the most part, yes, but within the narrative gaps lie some clues worth examining closely.

Brockman has primarily overseen the company’s “Scale” department for the past 18 months, managing GPU infrastructure, data centers, and supply chains. This interview covered an extremely wide range of topics: from why Sora was shut down, to what the Super App looks like, to what the next-generation model can do, to how the $110 billion will be spent, to why he donated $25 million to MAGA Inc.

Original video: https://www.youtube.com/watch?v=J6vYvk7R190


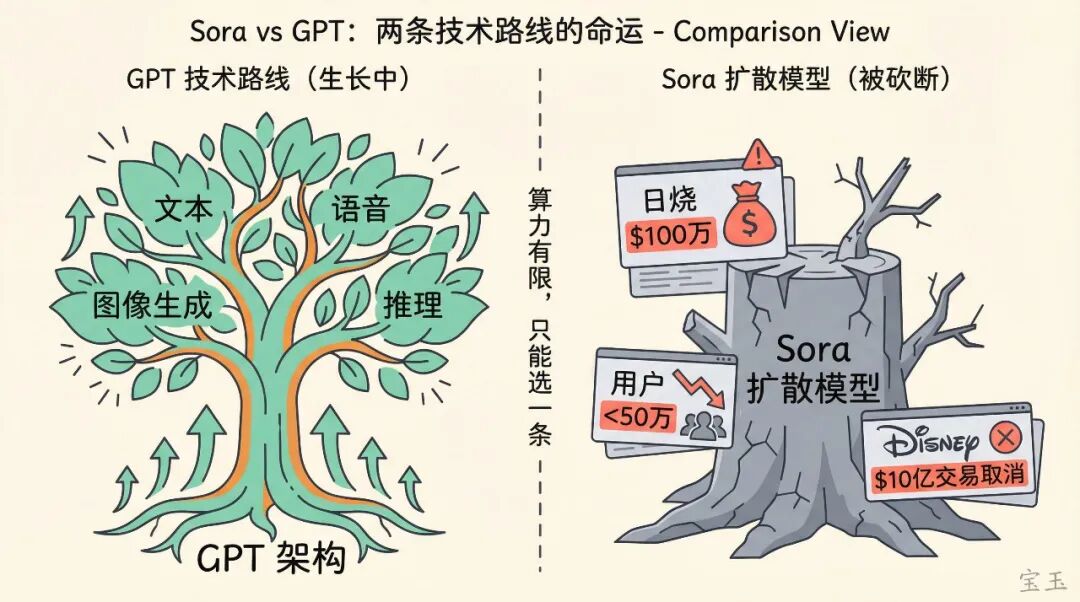

1. Was shutting down Sora “focusing on the core technology” or a move to stop the bleeding? Brockman said Sora and GPT are different branches on the technology tree, and with limited compute, OpenAI had to choose one. However, he didn’t mention that Sora was burning a million dollars a day or that its user base had dropped below 500,000.

2. The Super App will merge ChatGPT, the coding agent Codex, and the browser Atlas into a unified application, expected to be delivered within the next few months. However, this vision directly overlaps with Microsoft 365 Copilot, a point no one raised during the interview.

3. The next-generation model Spud incorporates about two years of research breakthroughs. Brockman claims it will raise both the ceiling and the floor of AI capabilities, but his descriptions of its abilities are all qualitative and lack specific metrics.

4. Brockman put AGI progress at 70-80%, yet he also said the definition of AGI is “more like a vibe.” How can something that cannot be precisely defined be precisely quantified? He did not resolve this contradiction.

5. He admitted that OpenAI had lagged behind Anthropic in the “last-mile usability” of coding tools and claimed it has since caught up, but third-party data shows Anthropic’s enterprise market share is still growing rapidly.

6. He responded to Anthropic CEO Dario Amodei’s criticism that OpenAI’s infrastructure investment is “too aggressive” with “I disagree,” but did not address Amodei’s specific argument that “a one-year revenue forecast error could lead to bankruptcy.”

Shutting Down Sora: A Technical Choice or a Commercial Move to Stop the Bleeding?

Host Alex Kantrowitz opened by asking: if OpenAI is leading in the consumer market, why suddenly shift resources away from video generation?

The explanation Brockman offered was quite different from outside speculation. He said Sora’s model and the GPT series are “completely different branches on the technology tree,” built in fundamentally different ways. In a world of limited compute, advancing two technical paths at once is very costly. He used a vector analogy:

The sum of random vectors is zero; progress requires aligning directions.

Scattered forces cancel each other out; concentration creates momentum. He believes the problem in deep learning is not a lack of opportunities, but too many, and spreading investments means not getting far in any direction.
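The analogy can be checked with a small simulation. The sketch below is an illustration only and not something from the interview: it assumes each "effort" is a unit vector in 2D, and the function name `resultant_length` is made up for this example. Strictly speaking, the sum of random vectors is zero only on average. Its typical length grows roughly like the square root of the number of vectors, while perfectly aligned vectors add up linearly.

```python
import math
import random


def resultant_length(n, aligned, rng):
    """Return the length of the sum of n unit vectors in 2D.

    If aligned is True, every vector points along the x-axis.
    Otherwise each vector gets an independent, uniformly random direction.
    """
    x = y = 0.0
    for _ in range(n):
        angle = 0.0 if aligned else rng.uniform(0.0, 2.0 * math.pi)
        x += math.cos(angle)
        y += math.sin(angle)
    return math.hypot(x, y)


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 10_000              # hypothetical number of "efforts"

random_len = resultant_length(n, aligned=False, rng=rng)
aligned_len = resultant_length(n, aligned=True, rng=rng)

print(f"random directions:  {random_len:.1f} (on the order of sqrt(n) = {math.sqrt(n):.0f})")
print(f"aligned directions: {aligned_len:.1f} (exactly n = {n})")
```

With 10,000 vectors, the aligned sum has length 10,000, while the random sum typically lands on the order of 100. That gap is the quantitative version of Brockman's point about concentrating effort.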

This explanation is technically coherent, but it tells only half the story. According to WSJ investigations and TechCrunch reports, Sora had already failed commercially: its user base fell quickly from a peak of one million to fewer than 500,000 while the product burned about $1 million a day in compute. According to the WSJ, Disney’s planned $1 billion investment and character licensing deal for Sora was also canceled, and Disney was informed less than an hour before the public announcement. The shutdown therefore reflected both the logic of technical focus and the practical pressure of commercial damage control. Brockman chose to discuss only the former.

He emphasized this is not “shifting from consumer to enterprise,” but a necessary choice. He believes the two most important applications today are: a personal assistant that understands you and aligns with your goals, and an AI that can solve difficult problems for you. OpenAI’s existing compute isn’t even enough for just these two things.

A technical detail: the image generation feature within ChatGPT is unaffected. Image generation is based on the GPT architecture and follows the same technical path as text and voice; Sora used a diffusion model, which sits on a different branch of the technology tree. The Sora research team was not disbanded but moved to world simulation research for robotics.

Kantrowitz asked again: Google DeepMind’s Demis Hassabis believes the closest thing to AGI is not text models but image generators, because they must understand interactions between objects and how the world works. By abandoning this path, is OpenAI betting on the wrong track?

Brockman said this is a real risk: “Absolutely. In this field, you have to make choices, you have to place bets.” But he then pivoted, saying OpenAI is confident because “we have seen the path to AGI.” He gave an example: a physicist gave a long-unsolved problem to OpenAI’s model and received a solution 12 hours later; the physicist said it was the first time he felt the AI was “thinking.” However, Brockman did not reveal which physicist or which problem, making this case impossible to independently verify. [Note: Public information shows mathematician Terence Tao published a paper in March 2026 with core proofs completed by ChatGPT Pro, but that is in mathematics and does not directly correspond to the description here.]

Super App: A Grand Vision, But Who Will Answer the Microsoft Question?

<p style="margin: 1.5em 8px;letter-spacing