Harnessing Claude’s Intelligence | Three Core Patterns for Building Apps [Translated]

Original: Harnessing Claude’s Intelligence | 3 Key Patterns for Building Apps | Claude[1]

Author: Lance Martin

Anthropic co-founder Chris Olah once said[2] that generative AI systems like Claude are less “made” and more “cultivated.” Researchers set the conditions that guide their growth, but the exact structures or capabilities that ultimately emerge are often unpredictable.

This presents a challenge for building apps with Claude: developers often use agent harnesses (the external code that wraps, controls, and assists the model) to compensate for things Claude cannot do on its own. These harnesses encode assumptions about Claude's limits, and those assumptions go stale as Claude's capabilities improve. Even the lessons shared in this article deserve regular review.

In this article, we will share three core patterns that teams should adopt when building applications. These patterns help your app keep pace with the evolution of Claude’s intelligence while balancing latency and system costs. The three patterns are: Use what Claude knows, Ask ‘what can I stop doing?’, and Set boundaries carefully.

1. Use what Claude knows

We strongly recommend building your application using tools that Claude is already very familiar with.

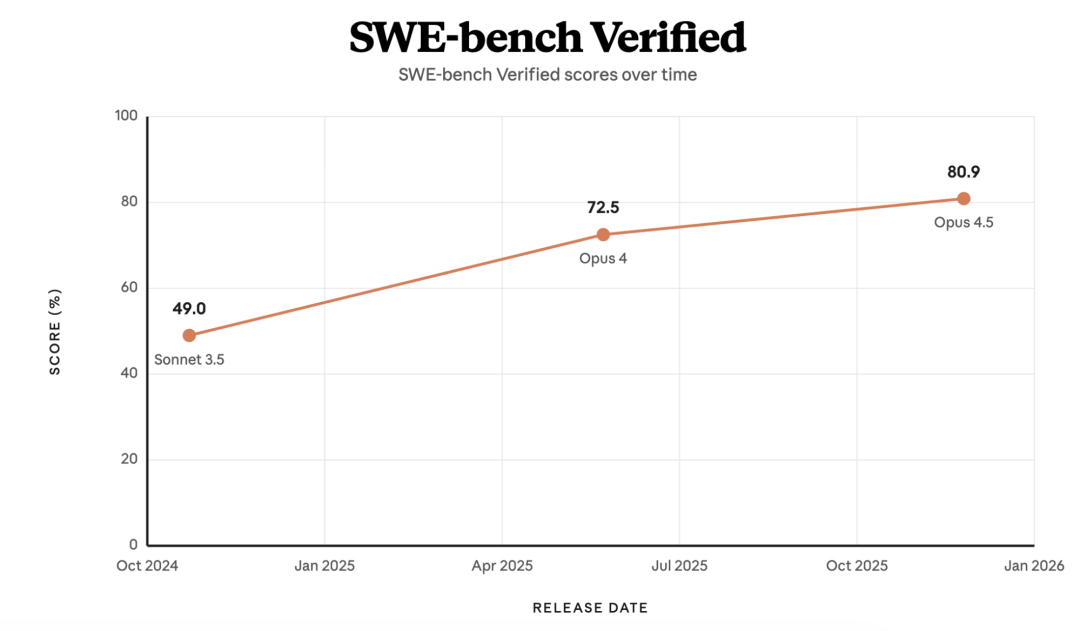

In late 2024, Claude 3.5 Sonnet scored 49% on SWE-bench Verified (a benchmark that evaluates how well AI solves real-world software engineering problems), the best published result at the time[3]. Notably, it used only a bash tool[4] (which lets the model run commands on a command line) and a text editor tool for viewing, creating, and modifying files[5]. Anthropic's official programming assistant, Claude Code, is built on these same tools. Bash[4] was not designed for building AI agents, but Claude knows it well, and Claude's skill with it will only improve over time.
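To make the idea of a bash tool concrete, here is a minimal sketch of what the harness side of such a tool might look like. It is not Anthropic's implementation: the function name `run_shell_command`, the timeout, and the truncation limit are assumptions chosen for illustration, and it uses the system's default shell via `subprocess` rather than bash specifically. In practice, a handler like this should only run inside a sandbox.

```python
import subprocess


def run_shell_command(command: str, timeout_s: float = 30.0, max_chars: int = 10_000) -> str:
    """Run one shell command and return its output as text for the model.

    Hypothetical harness-side handler: the name, timeout, and truncation
    limit are illustrative choices, not values from the article.
    """
    try:
        completed = subprocess.run(
            command,
            shell=True,           # the model supplies a full command line
            capture_output=True,  # collect stdout and stderr
            text=True,            # decode bytes to str
            timeout=timeout_s,
        )
    except subprocess.TimeoutExpired:
        return f"error: command timed out after {timeout_s} seconds"

    output = completed.stdout + completed.stderr
    if completed.returncode != 0:
        # Surface failures so the model can react to them.
        output += f"\n[exit code {completed.returncode}]"
    # Truncate so a noisy command cannot flood the context window.
    return output[:max_chars]


if __name__ == "__main__":
    print(run_shell_command("echo hello"))
```

The handler returns plain text either way, so a failed command becomes information the model can act on rather than an exception in the harness.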

The scores of successive Claude models on the SWE-bench Verified benchmark show this progression.

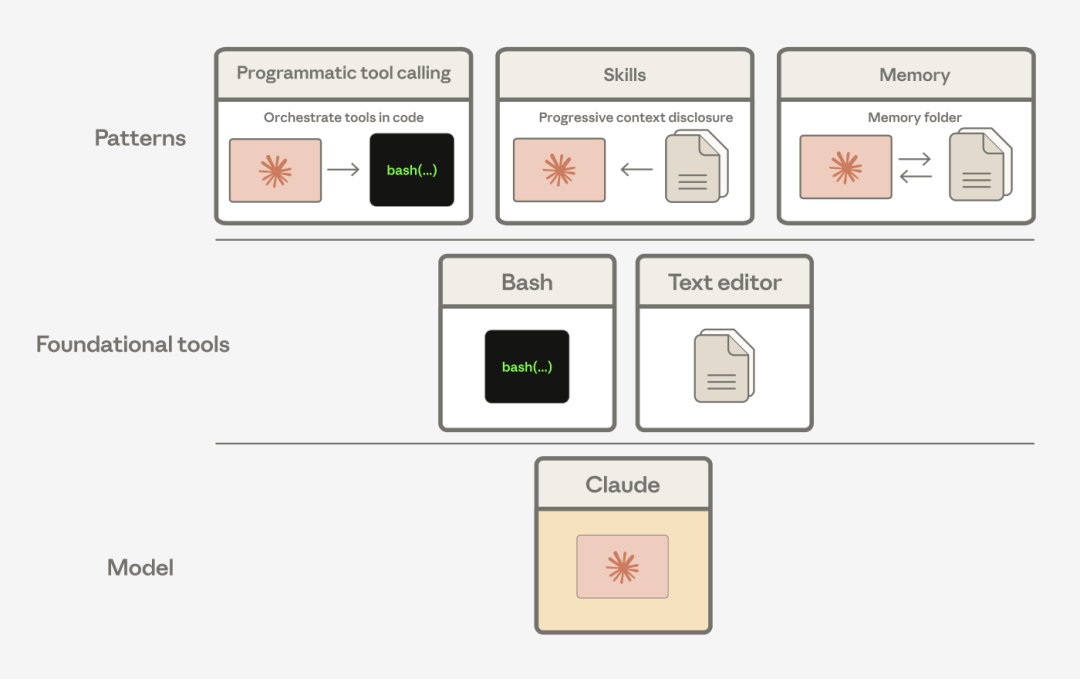

We’ve found that Claude can combine these general-purpose tools into incredibly rich patterns to solve various complex problems. For example, Agent Skills[6], programmatic tool calling[7] and memory tools[8] are essentially derived from combinations of the basic bash and text editor tools.

Programmatic tool calling, skills, and memory tools are actually clever combinations of our bash and text editor tools.

2. Ask ‘what can I stop doing?’

As noted above, agent harnesses encode our assumptions about what Claude cannot do[9]. As Claude becomes more capable, it is time to re-examine those assumptions.

Let Claude orchestrate actions autonomously

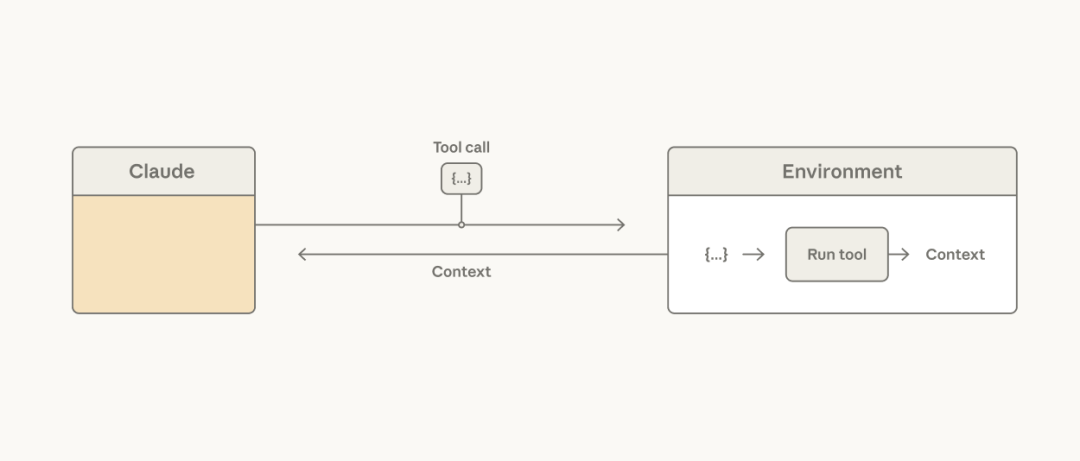

A common mistaken assumption is that every tool result must be fed straight back into Claude's context window[10] so it can decide what to do next. In practice, turning every tool result into tokens for the model to process is slow, expensive, and often unnecessary, especially when the result is only meant to be passed to the next tool or when Claude needs just a small part of the data.

Claude calls tools, which then execute in a specific environment.

Consider a scenario: to analyze a single column in a very large table, you feed the entire table to the model. The whole table lands in the context window, and you pay token costs for rows and columns Claude does not need. You could add hard-coded filters[11] to the tool, but that only treats the symptom. The core problem is that the harness is making an orchestration decision that Claude itself is better placed to make.
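The cost gap in this scenario can be illustrated with a rough sketch. The table below is synthetic, the column names are invented, and character count is used only as a crude stand-in for token count (real tokenization differs), so treat the numbers as directional.

```python
import csv
import io

# Synthetic table: 10,000 rows with columns the analysis does not need.
rows = [
    {
        "order_id": i,
        "region": "north" if i % 2 == 0 else "south",
        "amount": (i * 37) % 500 + 1,
        "note": f"free-text comment for order {i}",
    }
    for i in range(10_000)
]


def to_csv_text(records: list) -> str:
    """Serialize records the way a naive tool result might be sent to the model."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(records[0].keys()))
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Option A: the harness puts the whole table into the context window.
full_table_text = to_csv_text(rows)

# Option B: only the answer about the one column of interest is returned.
amounts = [row["amount"] for row in rows]
summary_text = f"amount: count={len(amounts)}, mean={sum(amounts) / len(amounts):.2f}"

print(f"full table: {len(full_table_text):,} characters")
print(f"summary:    {len(summary_text):,} characters")
```

The summary is orders of magnitude smaller than the serialized table, which is the saving at stake when deciding what actually needs to reach the model.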

Giving Claude access to a code execution[12] tool (such as the bash tool[4] or a language-specific REPL[12]) addresses this: Claude can write code that makes the tool calls and handles the data flow between them. Instead of the harness feeding every result into the context, Claude decides which results to skip, which to filter, and which to pipe directly into the next call. Intermediate results stay out of the context window; only the output of the code execution reaches Claude.
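As a sketch of this pattern, the script below stands in for code Claude might write inside a code execution environment. The two tools, `search_orders` and `lookup_customer`, are hypothetical stubs invented for this example, not real APIs; the point is only that the large intermediate result is filtered and piped in code, and a single short string is returned.

```python
from typing import Dict, List


def search_orders(region: str) -> List[Dict]:
    """Hypothetical tool: returns a large list of order records."""
    return [
        {"order_id": i, "region": region, "customer_id": i % 50, "amount": (i * 37) % 500 + 1}
        for i in range(5_000)
    ]


def lookup_customer(customer_id: int) -> Dict:
    """Hypothetical tool: returns details for one customer."""
    return {"customer_id": customer_id, "name": f"customer-{customer_id}"}


def model_written_script() -> str:
    """Stand-in for code the model writes to orchestrate the two tools."""
    # The 5,000 order records stay inside the execution environment.
    orders = search_orders("north")

    # Filter and aggregate in code instead of in the context window.
    totals: Dict[int, int] = {}
    for order in orders:
        totals[order["customer_id"]] = totals.get(order["customer_id"], 0) + order["amount"]
    top_customer_id = max(totals, key=lambda cid: totals[cid])

    # Pipe the intermediate result straight into the next tool call.
    customer = lookup_customer(top_customer_id)

    # Only this short string would be returned to the model.
    return f"Top customer in north: {customer['name']} (total {totals[top_customer_id]})"


result = model_written_script()
print(result)
```

Here the harness makes no decision about what to keep; the filtering logic lives in the script, so it can change from task to task without changes to the tools.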